Every element on your landing page (headline, button color, image placement, form length) affects whether a visitor becomes a client or bounces. The question is how you figure out which combination actually converts. That’s where the debate of multivariate testing vs A/B testing comes in. Both methods help you optimize, but they work differently, answer different questions, and suit different stages of your marketing funnel.

At Client Factory, we build and optimize client acquisition funnels for service businesses and law firms. Testing is central to what we do. We’ve run thousands of experiments across paid ads, landing pages, and conversion flows, and choosing the right testing method matters just as much as running the test itself. Pick the wrong one, and you’ll either waste time or miss insights that could significantly lift your conversion rate.

This article breaks down the core differences between A/B testing and multivariate testing, explains when each method makes sense, and gives you a practical framework for deciding which to use. By the end, you’ll know exactly which approach fits your optimization goals, and how to avoid common mistakes that burn through budget without delivering clear answers.

Why choosing the right test method matters

Choosing between multivariate testing and A/B testing without understanding each method’s requirements is one of the most expensive mistakes in conversion optimization. Both approaches help you improve page performance, but they operate on different assumptions, require different traffic volumes, and answer fundamentally different questions. Picking the wrong method means you either run out of data before reaching statistical significance, or you oversimplify your experiment and miss the real driver behind your conversion rate.

The method you choose shapes the quality of the insight you get, not just the speed at which you get it.

When sample size becomes your hard constraint

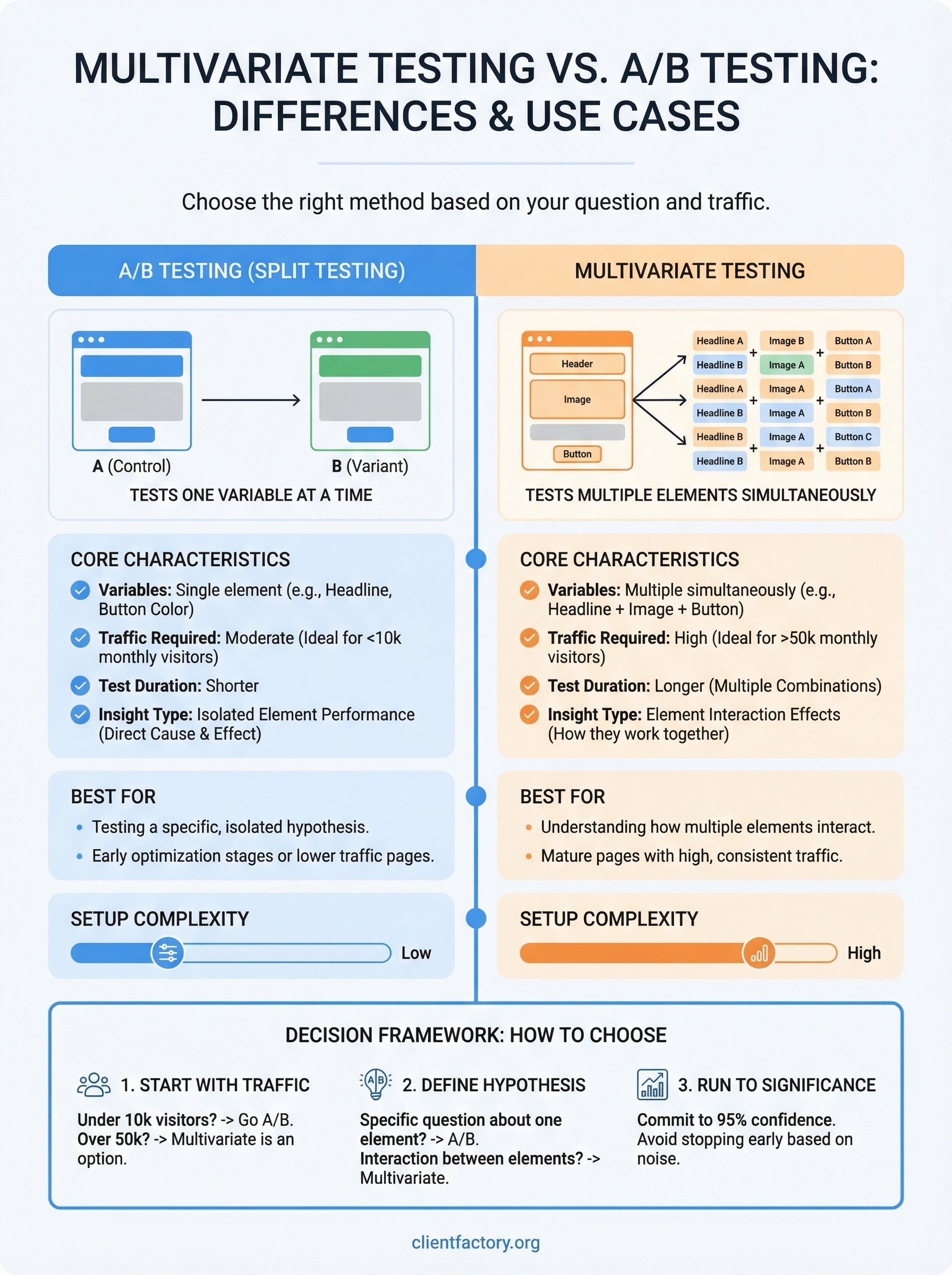

Traffic is the limiting factor in almost every test you run. A/B tests split your audience between two page versions, so you need a moderate volume of visitors to reach reliable results. Multivariate tests split traffic across every possible combination of elements. If you test three page elements with two variations each, you now have eight distinct combinations competing for the same traffic pool. On a low-traffic page, reaching significance for every combination could take months.

Running a test past its natural window creates a separate problem. Seasonality, ad spend fluctuations, and audience behavior shifts can corrupt long-running tests. Starting with a method your current traffic volume can actually support is not optional; it directly determines whether your results are actionable or misleading.

How the wrong method distorts your conclusions

Running a simple A/B test when your actual problem involves element interaction can send you in the wrong direction entirely. Suppose you change a headline and a hero image together inside one A/B variant. If that variant wins, you have no idea whether the headline drove the lift, the image did, or the two worked together. You then apply that “winning” change across your funnel and see inconsistent results because you never isolated the true cause.

Multivariate testing solves interaction blindness, but introduces its own risk. Add too many variables, call a test early, or misread partial data, and you mistake statistical noise for a genuine insight. Both errors lead to the same outcome: you invest budget optimizing toward something that does not actually move revenue. Knowing which method fits your situation before you start protects your traffic, your time, and your testing budget from getting burned on inconclusive experiments.

A/B testing explained

A/B testing, also called split testing, is the most direct form of controlled experimentation available to marketers. You create two versions of a page or element, label them A (control) and B (variant), then split your incoming traffic evenly between them. Whichever version produces a statistically significant lift in your target metric wins, and you roll it out.

How A/B tests work

You change one element between the control and the variant. That single-variable structure is what makes A/B testing powerful for diagnosing specific problems. If your call-to-action button copy changes from “Learn More” to “Get a Free Quote” and conversions go up, you know exactly why. The test gives you a clean, direct cause-and-effect answer because nothing else changed between the two versions.

A/B testing is most valuable when you have a clear hypothesis about one specific element driving a conversion problem.

Running a valid A/B test requires three things: a clear hypothesis, a defined success metric, and enough traffic to reach statistical significance before you call the result. Most practitioners aim for 95% confidence before acting on results. Stopping a test early because early numbers look good is one of the fastest ways to act on false data and make changes that hurt performance.
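To make the 95% confidence check concrete, here is a minimal sketch of a two-proportion z-test, one common way to compare two conversion rates. The function name and the visitor and conversion counts are hypothetical, and treating "95% confidence" as a two-sided p-value below 0.05 is an assumption; your testing platform may use a different statistical method.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for the difference between two conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate: the shared conversion rate if A and B truly performed the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical counts: 5,000 visitors per version, 200 vs 260 conversions.
p_value = two_proportion_p_value(conv_a=200, n_a=5000, conv_b=260, n_b=5000)

# Assumption: "95% confidence" is read as a two-sided p-value below 0.05.
significant = p_value < 0.05
print(significant)
```

A check like this only means something if you run it once, at the sample size or end date you committed to up front. Re-running it every day and stopping at the first significant reading is the early-stopping mistake described above.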

When A/B testing fits your situation

In the context of multivariate testing vs A/B testing, A/B testing fits when you have a specific, isolated question: does this headline outperform the current one? You do not need massive traffic volumes, and results are far easier to interpret than those from more complex experiments. If your page is early in its optimization lifecycle or your monthly visitor count sits below 10,000, starting with A/B tests gives you reliable, actionable data without overcomplicating the process.

Multivariate testing explained

Multivariate testing takes the controlled experiment further by testing multiple elements simultaneously on the same page. Rather than isolating one variable, you define several elements you want to test, such as a headline, a subheadline, and a button, create multiple variations for each, and then let the test engine serve every possible combination to your traffic. Each combination is treated as its own variant, and the test identifies not just which elements perform best independently, but how those elements interact with each other to influence conversions.

How multivariate tests work

The math behind multivariate testing grows fast. Test two variations of three separate elements and you have eight combinations running at the same time. Every combination needs enough traffic to produce statistically reliable results, which is why this method demands a significantly higher monthly visitor count than a simple A/B test. The testing platform tracks performance across all combinations and surfaces which specific arrangement of elements drives the highest conversion rate.
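The combination count is easy to verify yourself. The sketch below enumerates a full set of combinations for three elements with two variations each; the element names, most of the variation labels, and the 50,000-visitor figure are placeholders for illustration.

```python
from itertools import product

# Hypothetical elements and variations, for illustration only.
elements = {
    "headline": ["Headline A", "Headline B"],
    "hero_image": ["Image A", "Image B"],
    "cta_button": ["Learn More", "Get a Free Quote"],
}

# One test cell per combination of variations (2 x 2 x 2).
combinations = [
    dict(zip(elements, combo)) for combo in product(*elements.values())
]
print(len(combinations))  # 8

# With an even split, each combination sees only a slice of total traffic.
monthly_visitors = 50_000
visitors_per_combination = monthly_visitors / len(combinations)
print(visitors_per_combination)  # 6250.0
```

Adding a third variation to each element would raise the count to 27, which is why keeping the number of elements and variations small matters so much.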

Multivariate testing reveals interaction effects that A/B testing structurally cannot detect.

This interaction data is the real value. You might find that headline A combined with image B outperforms every other combination, even though image B performs below average when paired with headline B. Without multivariate testing, that insight stays completely hidden.
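Here is what that kind of interaction can look like in numbers. The conversion rates below are invented purely to illustrate the pattern; they are not results from a real test, and real data would still need a significance check before you act on it.

```python
# Hypothetical conversion rates for each headline/image combination.
rates = {
    ("headline_a", "image_a"): 0.040,
    ("headline_a", "image_b"): 0.062,
    ("headline_b", "image_a"): 0.045,
    ("headline_b", "image_b"): 0.035,
}

# Effect of switching to image B, measured separately under each headline.
image_b_lift_with_a = rates[("headline_a", "image_b")] - rates[("headline_a", "image_a")]
image_b_lift_with_b = rates[("headline_b", "image_b")] - rates[("headline_b", "image_a")]

# With no interaction, the two lifts would be roughly equal.
# A large gap means the image's effect depends on the headline.
interaction = image_b_lift_with_a - image_b_lift_with_b

best_combination = max(rates, key=rates.get)
print(best_combination)
```

In this made-up data, image B helps under headline A and hurts under headline B. An A/B test of the image alone would report only one of those two stories, depending on which headline happened to be live.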

When multivariate testing fits your situation

In the comparison of multivariate testing vs A/B testing, multivariate testing fits when you already have a high-traffic page and want to understand how multiple elements work together, not in isolation. If your page receives at least 50,000 monthly visitors and you have a defined set of elements that you suspect are interacting to affect conversions, multivariate testing gives you a level of precision that no A/B test can match.

Key differences that change your decision

When you compare multivariate testing and A/B testing side by side, the differences go beyond how many elements you test. They affect how long your test runs, what you learn from the data, and how much traffic you need before results become trustworthy. The table below maps the core differences you need to factor into your decision.

| Factor | A/B Testing | Multivariate Testing |
|---|---|---|
| Variables tested | One at a time | Multiple simultaneously |
| Traffic required | Moderate | High (50,000+ monthly visitors) |
| Test duration | Shorter | Longer |
| Insight type | Isolated element performance | Element interaction effects |
| Setup complexity | Low | High |

Traffic volume and test duration

Traffic is where the practical gap becomes impossible to ignore. A/B tests require far less traffic because you only split your audience between two versions. Multivariate tests divide that same pool across every element combination, which multiplies the sample size you need to reach statistical significance. Push a multivariate test on a low-traffic page and none of your combinations will accumulate enough data to produce a reliable winner.

Your test duration also grows roughly in proportion to the number of combinations you create. Two variations each across three elements gives you eight combinations that all need traffic simultaneously. A single A/B test on any one of those elements needs only a fraction of the time and visitors to produce a confident, actionable result.

Running a multivariate test without sufficient traffic means you will wait weeks only to end up with inconclusive results you cannot act on.
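You can get a rough feel for the gap before you build anything. The sketch below uses a standard sample-size approximation for comparing two conversion rates, then scales it by the number of versions in the test. The baseline rate, target rate, 80% power, and 10,000 monthly visitors are all assumed inputs, and treating every combination as its own arm is a simplification; a real multivariate analysis may need fewer or more visitors depending on how the platform models the data.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_arm(baseline_rate, expected_rate, alpha=0.05, power=0.80):
    """Approximate visitors needed per version to detect the given lift."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_power = NormalDist().inv_cdf(power)
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return ceil((z_alpha + z_power) ** 2 * variance / effect ** 2)


def months_to_finish(arms, monthly_visitors, per_arm):
    """Months of traffic needed if visitors are split evenly across arms."""
    return arms * per_arm / monthly_visitors


# Assumed inputs: 4% baseline conversion rate, hoping to detect a lift to 5%.
per_arm = visitors_per_arm(baseline_rate=0.04, expected_rate=0.05)

ab_months = months_to_finish(arms=2, monthly_visitors=10_000, per_arm=per_arm)
mvt_months = months_to_finish(arms=8, monthly_visitors=10_000, per_arm=per_arm)
print(per_arm, round(ab_months, 1), round(mvt_months, 1))
```

Under these assumptions the eight-combination test takes four times as long as the two-version test on the same page, and smaller expected lifts push both numbers up quickly.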

Scope of insight

A/B testing gives you a direct answer about one specific change, and that clarity is its biggest strength when you have a defined hypothesis. Multivariate testing does something different: it reveals how elements interact with each other rather than how each one performs in isolation. You can discover that a specific combination of headline and image outperforms every other arrangement, even when that image looks average when paired with a different headline. Your conversion problem determines which type of insight you actually need.

How to choose and run the right test

Deciding between multivariate testing and A/B testing comes down to three variables: your monthly traffic, the number of elements you want to test, and the specific question you need answered. Before you build any test, you need honest answers to all three, because a method chosen without them burns through your traffic and produces data you cannot confidently act on.

Start with your traffic numbers

Your monthly visitor count is the first filter. If your page receives fewer than 10,000 visitors per month, run an A/B test. You do not have enough traffic to split across multiple combinations without waiting so long that seasonal shifts or ad spend changes contaminate your results. If you are above 50,000 monthly visitors and you suspect that multiple page elements are interacting to affect your conversion rate, multivariate testing becomes a viable and genuinely useful option.

The fastest way to waste a testing budget is to run a multivariate test on a page that does not have the traffic to support it.
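The traffic filter can be written down as a simple rule of thumb. The sketch below encodes the 50,000-visitor threshold from this article; the function and parameter names are made up for illustration, and defaulting to an A/B test for pages between 10,000 and 50,000 monthly visitors is an assumption, since that middle range depends on your conversion rate and how long you can let a test run.

```python
# Threshold taken from this article; adjust for your own conversion rates.
MVT_MIN_VISITORS = 50_000


def recommend_test(monthly_visitors, elements_to_test, suspect_interaction):
    """Return 'multivariate' or 'ab' based on traffic and the question asked."""
    enough_traffic = monthly_visitors >= MVT_MIN_VISITORS
    multiple_elements = elements_to_test >= 2
    if enough_traffic and multiple_elements and suspect_interaction:
        return "multivariate"
    # Assumption: anything else, including the 10,000 to 50,000 range,
    # defaults to the simpler A/B test.
    return "ab"


print(recommend_test(8_000, 3, True))    # ab
print(recommend_test(60_000, 3, True))   # multivariate
print(recommend_test(60_000, 1, False))  # ab
```

Treat the output as a starting point, not a verdict. A high-traffic page with a single, focused hypothesis is still better served by an A/B test.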

Define your hypothesis before you build anything

A vague test produces vague results. Write your hypothesis in one sentence before you open your testing platform: “Changing [specific element] from [current state] to [new state] will increase [target metric] because [reason].” That structure forces clarity and keeps your test anchored to a measurable outcome rather than a general sense that something could perform better.

Once your test runs, let it reach statistical significance before you call a winner. Most platforms flag 95% confidence as the standard cutoff. Stopping early because early numbers look promising is how teams make expensive changes based on noise. Commit to a pre-defined sample size or end date and hold to it no matter what early data suggests.

Final takeaways

The core distinction in multivariate testing vs A/B testing comes down to what question you need answered and whether your traffic can support the method. A/B testing gives you a fast, clean answer about one specific element when you have a focused hypothesis and moderate traffic. Multivariate testing reveals how multiple elements interact and influence each other, but only when your page volume can sustain it.

Your traffic level is the first decision point, not the last. Start with A/B tests to build a baseline of optimization wins, then scale into multivariate testing once your traffic and data quality support it. Choosing the right method before you build your test protects your budget and produces results you can actually act on.

If you want a clear look at where your current funnel is losing conversions, book a free conversion audit and get a direct plan for what to test first.