You’re running ads, driving traffic, and getting clicks, but your conversion rates are underwhelming. Before you scrap the campaign and start over, there’s a smarter move: testing what you already have. If you’ve ever wondered what split testing is, the short answer is that it’s a method of comparing two versions of a marketing asset to see which one performs better. The longer answer is that it’s one of the most reliable ways to improve ROI without increasing your ad spend by a single dollar.

At Client Factory, split testing is baked into everything we do for our clients. When we build acquisition funnels for service businesses and law firms, we don’t guess which headline, offer, or landing page layout will convert best. We test and let the data decide. That approach is a big part of how we turn clicks into actual clients.

This article breaks down exactly how split testing works, walks through real examples across ads, emails, and landing pages, and gives you a practical framework to run your own tests. Whether you’re optimizing a Google Ads campaign or tweaking a lead capture page, you’ll leave with a clear understanding of how to make every element of your marketing pull more weight.

Split testing vs A/B testing and multivariate tests

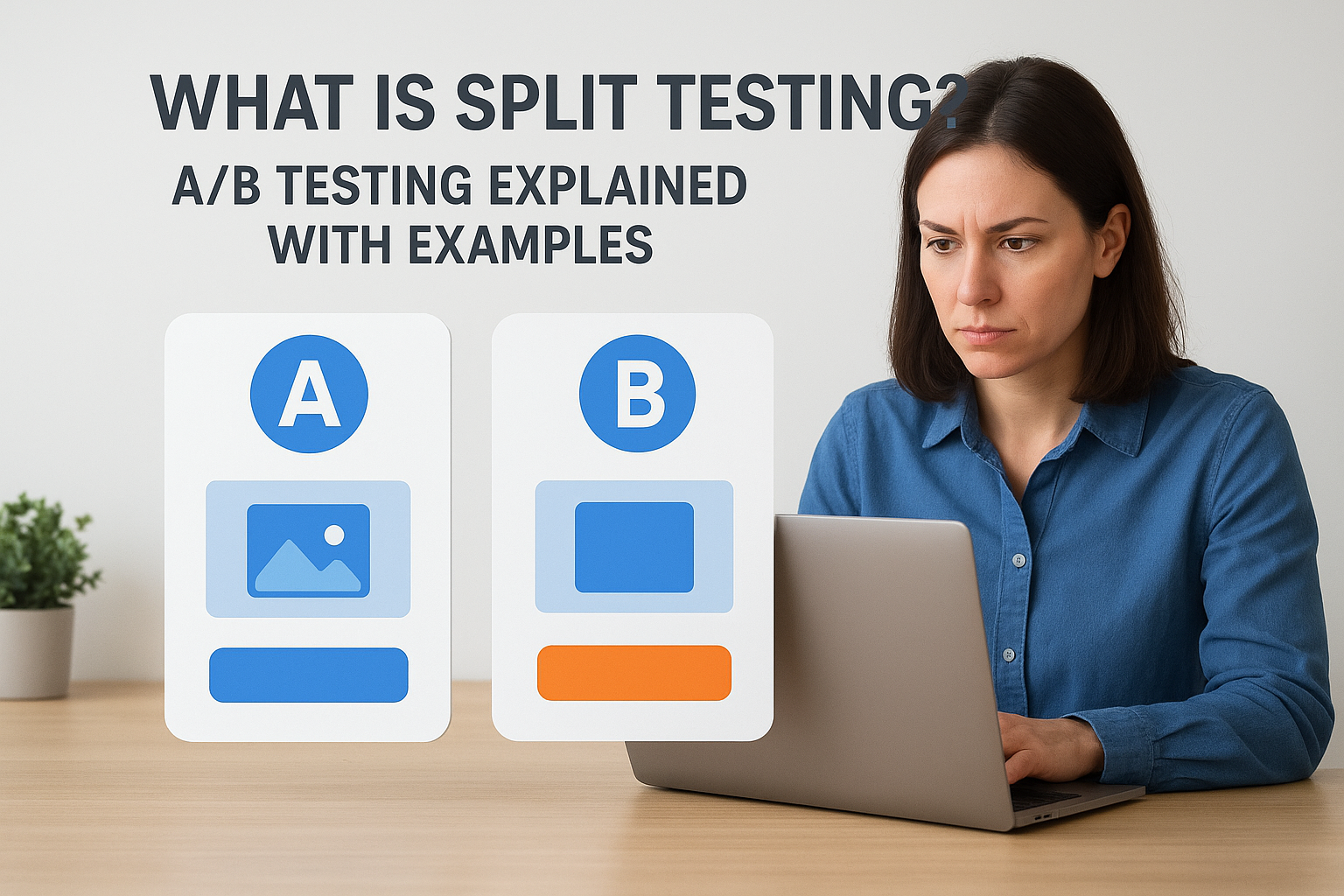

When people ask what split testing is, they’re usually asking about the same thing as A/B testing. The two terms get used interchangeably across the marketing industry, and for good reason: they describe the same core process of pitting one version of a marketing asset against another to find out which one performs better. The confusion typically starts when multivariate testing enters the conversation, because that’s a genuinely different method that often gets lumped in with the others.

Why "split testing" and "A/B testing" mean the same thing

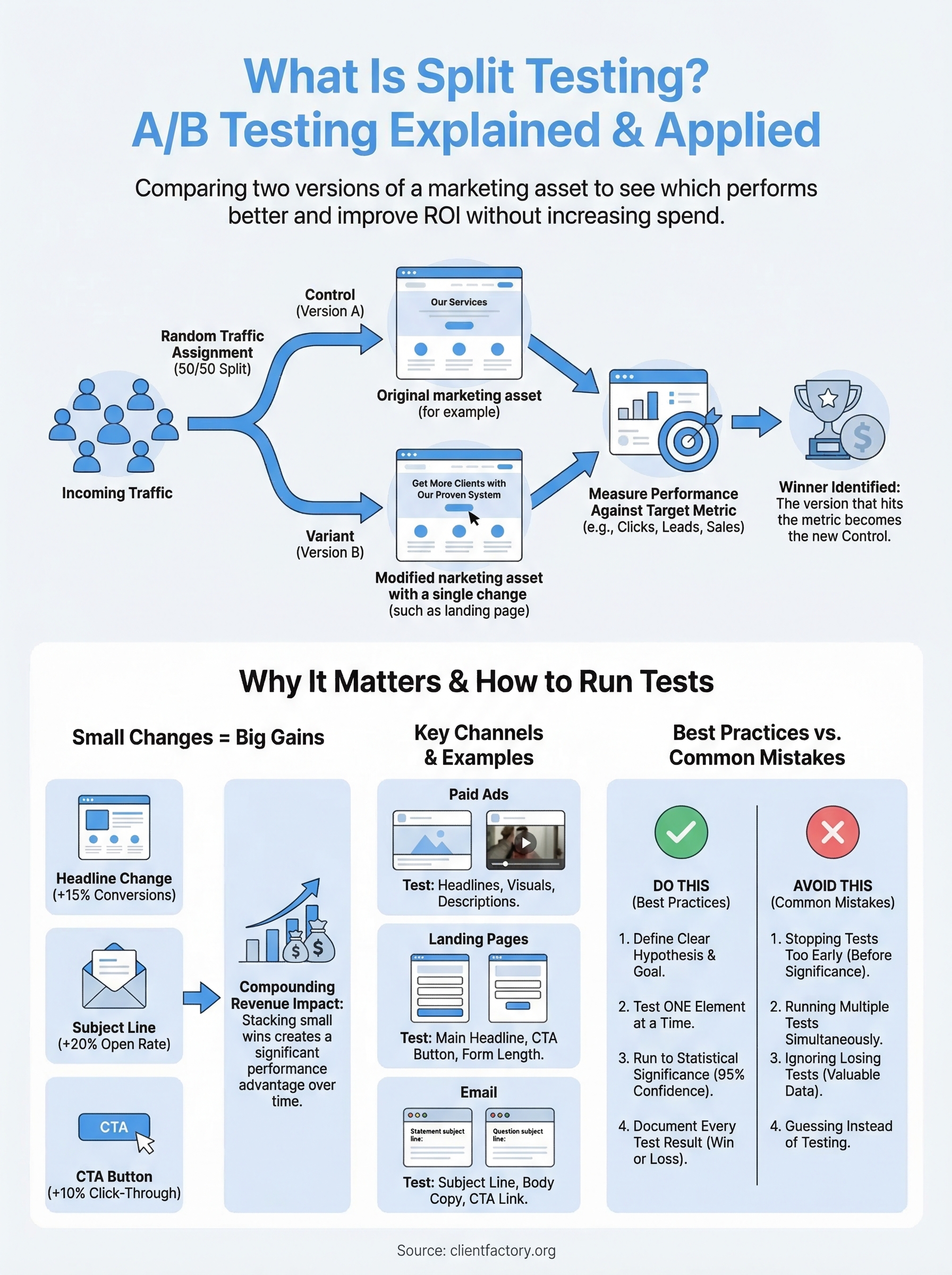

The name "split testing" comes from splitting your audience into two groups and showing each group a different version of the same page, ad, or email. Group A sees the original (called the control), and Group B sees the modified version (called the variant). At the end of the test, you compare results and pick the winner based on whichever version hit your target metric, whether that’s click-through rate, form submissions, or booked consultations.

"A/B testing" is simply another label for the same process. The "A" is your control, the "B" is your variant. Some platforms use one term, some use the other, but there’s no technical difference in methodology. Google uses both terms in its documentation when describing experiments in Google Ads and Google Analytics 4.

The label doesn’t change the logic: you isolate one variable, split your traffic, and measure the outcome.

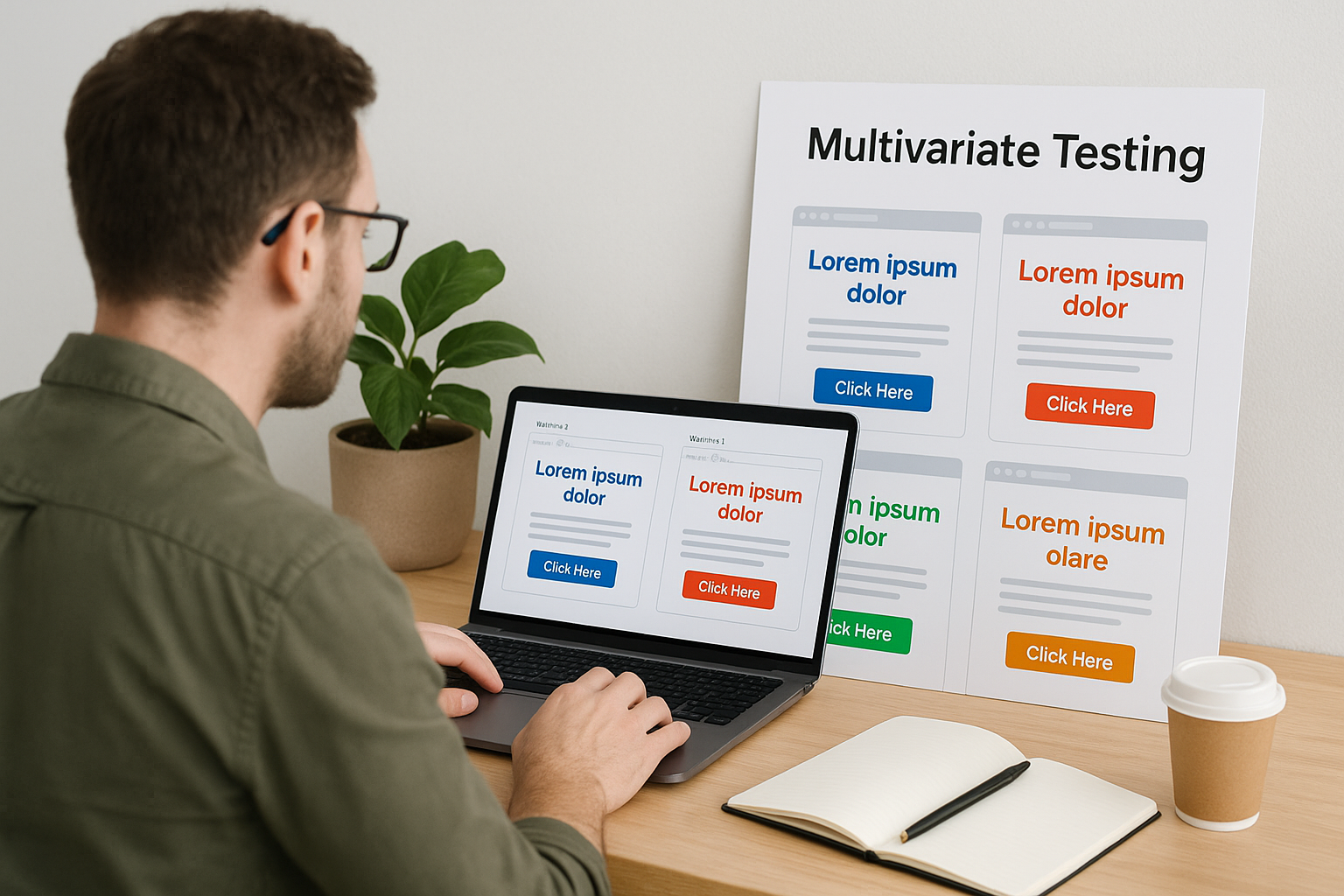

How multivariate testing differs

Multivariate testing takes the split testing concept and scales it up. Instead of testing one change at a time, you test multiple elements simultaneously. For example, you might test three different headlines combined with two different button colors on the same landing page, creating six total combinations running at once.

The practical problem is that multivariate testing requires significantly more traffic to reach statistical significance. You’re splitting your audience across more combinations, so each bucket gets a smaller slice of your total visitors. That means slower tests and a higher risk of inconclusive results if your traffic volume isn’t large enough to support it.

Results are also harder to interpret. A standard split test gives you a clear outcome: A beat B, or B beat A. With multivariate testing, you’re analyzing interaction effects between multiple elements, which demands more statistical expertise and a larger data set to draw reliable conclusions.
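To put rough numbers on the traffic problem, here is a minimal Python sketch that counts the combinations in the three-headline, two-button-color example and shows how thin each bucket gets. The labels and the 6,000-visitor figure are hypothetical, chosen only to make the arithmetic easy to follow.

```python
from itertools import product

# Hypothetical labels for the elements under test
headlines = ["Headline 1", "Headline 2", "Headline 3"]
button_colors = ["green", "orange"]


def visitors_per_combination(total_visitors: int, *elements: list) -> tuple:
    """Return (number of combinations, visitors per combination) for an even split."""
    combinations = list(product(*elements))
    return len(combinations), total_visitors // len(combinations)


n_combos, per_bucket = visitors_per_combination(6000, headlines, button_colors)
print(n_combos, per_bucket)  # 6 combinations, 1000 visitors each
```

The same 6,000 visitors in a plain A/B test would give each version 3,000 visitors, which is why simple split tests tend to reach a conclusion sooner.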

When to use each approach

For most businesses, especially service businesses and law firms with moderate traffic levels, split testing is the right starting point. It’s faster to set up, simpler to analyze, and produces actionable data without requiring massive traffic volume. You can learn quickly, make improvements in weeks rather than months, and build momentum.

Reserve multivariate testing for situations where you have high traffic and a clear hypothesis about how multiple elements interact. If you’re unsure which approach fits your current situation, start with A/B. The insights you gather through straightforward split tests will inform better multivariate experiments down the road once your traffic and testing maturity support that level of complexity.

Why split testing matters for marketing performance

Understanding the definition of split testing is one thing; understanding why it moves the needle on revenue is another. Most marketing decisions get made on instinct, past experience, or what worked for a competitor. The problem is that your audience, offer, and funnel are unique, and what works for someone else may underperform for you. Split testing replaces guesswork with evidence, giving you a direct line between a specific change and a measurable outcome.

Small changes produce measurable revenue gains

A single winning test can have a compounding effect on your bottom line. Changing a headline on a landing page from generic to benefit-driven might lift conversions by 15%. That improvement carries through every dollar you spend driving traffic to that page. Multiply that across your email sequences, ad creative, and call-to-action buttons, and you’re looking at a significantly higher return from the same budget.

The cumulative effect of consistent split testing is what separates businesses that scale from those that plateau.

Here’s a simple way to see the math in action:

| Element Tested | Lift | Impact |
|---|---|---|
| Landing page headline | +15% conversions | More leads per dollar spent |
| Email subject line | +20% open rate | More clicks without extra sends |
| CTA button copy | +10% click-through | Better funnel flow |

Stacking small wins across multiple touchpoints compounds into a meaningful performance advantage over time. Each improvement builds on the last, and your funnel gets stronger with every test you complete.
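As a rough illustration of how stacked wins multiply, the Python sketch below combines the three example lifts from the table. It rests on a simplifying assumption that is not guaranteed in practice: the three lifts apply to consecutive stages of the same funnel (open, click, convert) and do not affect one another.

```python
def combined_lift(lifts: list) -> float:
    """Multiply stage-level lifts together and return the overall lift as a fraction."""
    total = 1.0
    for lift in lifts:
        total *= 1.0 + lift
    return total - 1.0


# Example lifts from the table: +15% conversions, +20% opens, +10% click-through
overall = combined_lift([0.15, 0.20, 0.10])
print(f"{overall:.1%}")  # 51.8%
```

Under that assumption, three modest wins add up to more than the 45% you would get by simply summing them, which is the compounding effect in miniature.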

The cost of guessing

Every week you run an untested ad or leave a landing page unchanged, you’re accepting your current conversion rate as the ceiling. That’s a real cost. If your page converts at 3% and a tested version could convert at 4.5%, every 1,000 visitors represents 15 missed leads. Over a full campaign, that gap becomes impossible to ignore.

Guessing also means you can’t learn systematically. One failed campaign teaches you nothing if you don’t know which element caused the drop. Split testing gives you the diagnostic layer that turns both wins and losses into actionable intelligence you can apply to your next campaign.
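The missed-lead arithmetic above is easy to rerun with your own numbers. This is a minimal Python sketch; the function name is made up for illustration, and the tested rate is whatever you believe an improved version could reach, not a guaranteed outcome.

```python
def missed_leads(visitors: int, current_rate: float, tested_rate: float) -> float:
    """Leads left on the table by staying at the current conversion rate."""
    return visitors * tested_rate - visitors * current_rate


# The example from the text: 3% today versus a possible 4.5%, per 1,000 visitors
print(round(missed_leads(1000, 0.03, 0.045)))  # 15
```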

How split testing works behind the scenes

When you dig into how split testing works at a mechanical level, the process is more straightforward than most people expect. At its core, a split test works by using random traffic assignment to send visitors to one version or another without any bias. That randomization is critical because it prevents user behavior patterns, like time of day or device type, from skewing your results.

Traffic splitting and randomization

When a visitor lands on your page or opens your email, the testing platform automatically assigns them to a group using a randomization algorithm. Roughly half go to the control (version A), and the other half go to the variant (version B). The assignment is sticky, meaning the same visitor keeps seeing the same version instead of being re-randomized on every page load or return visit. Consistent exposure keeps your data clean and prevents users from experiencing a confusing mix of both versions.

Most platforms handle this assignment through a combination of cookies and URL parameters. When a visitor lands, the system drops a cookie in their browser tagging them as an "A" or "B" user. That tag persists across their session so the experience stays consistent. In this kind of setup, both versions typically run on the same URL, so you’re not splitting traffic across two separate pages or ad destinations.
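To make the idea of a sticky assignment concrete, here is a minimal Python sketch. Note that it swaps the cookie for a different technique: it hashes a visitor ID so the same visitor always lands in the same group. That is an assumption made for illustration, not a description of how any particular platform works, and the `visitor_id` and `test_name` values are hypothetical.

```python
import hashlib


def assign_variant(visitor_id: str, test_name: str = "headline-test") -> str:
    """Deterministically map a visitor to 'A' or 'B' with a roughly even split."""
    digest = hashlib.sha256(f"{test_name}:{visitor_id}".encode("utf-8")).hexdigest()
    return "A" if int(digest, 16) % 2 == 0 else "B"


# The same visitor always gets the same version.
assert assign_variant("visitor-123") == assign_variant("visitor-123")

# Across many visitors, the split comes out close to 50/50.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"visitor-{i}")] += 1
print(counts)
```

Including the test name in the hash means a visitor's group in one test does not determine their group in the next one.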

How significance is measured

Running the test is only half the job. Statistical significance is what tells you whether the difference you’re seeing between version A and version B is real or just random noise. Most testing tools calculate this automatically using a p-value, which estimates how likely you would be to see a difference at least this large if the two versions actually performed the same.

A standard threshold for declaring a winner is 95% confidence, meaning a gap this large would show up by chance only about 5% of the time if the two versions truly performed the same.

Your sample size directly affects how quickly you reach significance. More traffic means faster, more reliable conclusions. Running a test on low-traffic pages for only a few days is a common error that leads to calling winners prematurely. You need enough data points for the results to hold up before you make permanent changes to your funnel.
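If you want to see why sample size matters so much, the sketch below runs a pooled two-proportion z-test in plain Python. This is one common way to compare two conversion rates; your testing tool may use a different method, so treat it as an illustration rather than a replica of any platform's calculation. The visitor and conversion counts are hypothetical, built from the 3% versus 4.5% rates used earlier.

```python
from math import erf, sqrt


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the gap between two conversion rates (pooled z-test)."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Standard normal CDF expressed through the error function
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return 2 * (1 - cdf)


for visitors in (1000, 3000):
    conv_a = round(visitors * 0.03)   # control converts at 3%
    conv_b = round(visitors * 0.045)  # variant converts at 4.5%
    p = two_proportion_p_value(conv_a, visitors, conv_b, visitors)
    verdict = "significant" if p < 0.05 else "not yet significant"
    print(f"{visitors} visitors per version: p = {p:.3f} ({verdict})")
```

With 1,000 visitors per version, the same 3% versus 4.5% gap does not clear the 95% bar; with 3,000 per version it does. The rates are identical in both runs, and only the amount of data changes.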

How to run a split test step by step

Now that you understand what split testing is and how it works mechanically, you need a repeatable process for running tests that produce reliable, actionable data. Skipping steps or rushing the setup is the fastest way to generate results you can’t trust. Follow this sequence on every test, and you’ll build a consistent habit that pays off across your entire funnel.

Define your goal and hypothesis

Before you touch any testing platform, identify the single metric you want to move. That could be landing page conversion rate, email click-through rate, or ad click-through rate. One metric per test keeps the analysis clean. Then write a hypothesis in plain language: "Changing the CTA button from ‘Submit’ to ‘Get My Free Audit’ will increase form submissions because it communicates specific value instead of a generic action."

A clear hypothesis forces you to think through the reasoning behind the change before you run it, which makes your results far more useful whether the test wins or loses.

Set up your test and run it

Once your hypothesis is in place, build both versions of your asset. Change only the element your hypothesis describes. If you’re testing a headline, leave everything else (layout, images, and button copy) identical between both versions. Set your traffic split to 50/50 so both versions collect data at the same rate.

Run your test for a minimum of two full weeks, or until each version has accumulated at least 100 conversions, whichever comes later.

Cutting the test short is the most common mistake at this stage. Early results fluctuate, and declaring a winner before reaching statistical significance means you’re making permanent funnel decisions based on noise.
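The run-length rule above can be written down as a simple check so nobody is tempted to peek and call it early. This is a minimal Python sketch with a hypothetical function name; the 14-day and 100-conversion defaults come straight from the rule, and passing this check only means the test has run long enough to evaluate, not that a winner exists.

```python
def ready_to_evaluate(
    days_running: int,
    conversions_a: int,
    conversions_b: int,
    min_days: int = 14,
    min_conversions: int = 100,
) -> bool:
    """True only when both the time floor and the per-version conversion floor are met."""
    return (
        days_running >= min_days
        and conversions_a >= min_conversions
        and conversions_b >= min_conversions
    )


print(ready_to_evaluate(10, 140, 150))  # False: fewer than 14 days
print(ready_to_evaluate(21, 80, 120))   # False: version A is under 100 conversions
print(ready_to_evaluate(16, 110, 125))  # True: both conditions are met
```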

Read the results and act on them

When your testing platform signals statistical significance at 95% confidence or higher, you’re ready to call the result. If the variant wins, implement it as your new control and document what you learned. If it loses, the hypothesis was wrong, and that’s still valuable information about your audience’s actual behavior.

Log every test you run, including the hypothesis, the result, and the core insight it produced. That record becomes a strategic reference point as you scale your testing program across more channels and funnel stages.
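A test log does not need special software. As one possible shape, here is a minimal Python sketch of a log entry; the class and field names are illustrative, and the example entry reuses the CTA hypothesis from earlier with its result left as pending.

```python
from dataclasses import asdict, dataclass


@dataclass
class SplitTestRecord:
    """One row in a split-test log (field names are illustrative)."""
    asset: str
    hypothesis: str
    result: str
    insight: str


log = []
log.append(
    SplitTestRecord(
        asset="Landing page CTA button",
        hypothesis="'Get My Free Audit' will beat 'Submit' because it communicates specific value",
        result="pending",
        insight="",
    )
)

for record in log:
    print(asdict(record))
```

A spreadsheet with the same four columns works just as well. What matters is that every test gets a row.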

What to test and examples by channel

Once you understand split testing in principle, the next question is where to apply it first. Your biggest conversion gaps should guide that decision. Start by identifying which channel drives the most traffic or costs the most money, then focus your first tests there for the most immediate impact.

Paid ads

Your ad creative is the first thing a potential client sees, so it’s a logical starting point. On Google Search Ads, test your headlines and descriptions. A headline that leads with a client pain point often outperforms a generic service statement because it creates immediate relevance for the reader.

On Facebook and YouTube, the visual element carries the most weight. Test a static image against a short video clip, or test a direct-to-camera testimonial against a service walkthrough. Small creative shifts at the top of your funnel can dramatically reduce your cost per lead without changing your budget.

Landing pages

Your landing page is where clicks become leads, which makes it one of the highest-leverage places to run tests. Start with your headline. It’s the first element visitors read, and it has more influence over whether someone stays or bounces than any other single element on the page.

A single headline change on a landing page can move your conversion rate more than any other individual element.

After the headline, test your form length and CTA button copy. A three-field form typically converts at a higher rate than a longer one, but the lead quality may differ. That tradeoff is worth testing in your specific context rather than assuming a universal rule applies to your audience.

Email

Email gives you one of the fastest feedback loops in marketing because results surface within 24 to 48 hours of sending. Start with your subject line since it controls whether people open the email at all. A direct question in the subject line tends to pull better open rates than a declarative statement.

Once open rates improve, shift your focus to body copy and your CTA link. Test a single-focus email with one clear link against a version with multiple links to see which format drives more conversions to your intended destination.

Best practices and common mistakes to avoid

Knowing how split testing works mechanically is only part of the equation. How you run your tests determines whether you collect reliable data or misleading noise, and the difference between the two often comes down to a handful of habits that separate disciplined testers from those who waste time on inconclusive experiments.

Practices that produce reliable results

Consistency is the foundation of every trustworthy test. Keep your external conditions as stable as possible while the test runs. Avoid running tests during unusual traffic spikes, major holidays, or periods when your ad budget is actively changing, because those factors introduce variables you cannot control or account for in your results.

The only variable that should change between your control and variant is the single element your hypothesis describes.

Test one element at a time. If you change the headline, button color, and hero image simultaneously, you won’t know which change drove the outcome. Document every test you run, including what you changed, your hypothesis, the result, and the core takeaway. That log becomes a compounding strategic asset as your testing program grows across channels and funnel stages.

Mistakes that cost you accurate data

Calling a test too early is the single most common error. It’s tempting to stop after a few days when one version shows a clear lead, but early data fluctuates heavily. A variant that looks like a winner on day three may fully reverse by day ten once your complete audience distribution has been captured and day-of-week patterns even out.

Running too many tests at the same time is another problem marketers frequently overlook. Overlapping tests on the same page or same audience segment can cause your traffic splits to interfere with each other, contaminating the data from both experiments. Run one test per funnel stage at a time, and wait for a statistically significant result before launching the next experiment.

Never discard a losing test without reading it carefully. A variant that underperforms your control still tells you something concrete about your audience’s behavior and preferences. That information directly sharpens your next hypothesis, making each round of testing more targeted and more likely to produce a meaningful lift.

Next steps

Now you have a complete picture of what split testing is and how to apply it across every major channel in your marketing funnel. The most important move from here is identifying one element in your current funnel, writing a clear hypothesis, and launching your first test this week. You don’t need a large budget or a complex testing platform to start generating useful, reliable data.

Each test you complete adds to a growing knowledge base about your specific audience, and that knowledge compounds over time into a funnel that consistently converts more visitors into paying clients. Small, consistent improvements across your ads, landing pages, and email campaigns stack into a meaningful revenue advantage that widens with every test cycle. If you want experienced eyes on your current setup to identify where to start, book a free conversion audit and we’ll map out the highest-impact opportunities in your funnel.